|

|

The Lambda Calculus in NotepadSubmitted by Michael L Perry on Mon, 11/23/2015 - 23:47

The Lambda Calculus is a system of logic created by Alonzo Church in 1929 to express theorems in a minimal system with a consistent set of rules. The Lambda Calculus is sufficient to compute any mathematical formula that we can evaluate, or to run any program that we could give a computer. It can even run its own rules. And it does it all with search and replace; functions readily executed in Notepad. In this talk, we explore computer language design from the perspective of The Lambda Calculus. We build up a system of computation, data structures, and algorithms. And we do it all in Notepad! Attached is the output of the first presentation of this talk, at the Dallas Functional Programmers. November 23, 2015. Jinaga - A universal back-end for single page web applicationsSubmitted by Michael L Perry on Sat, 10/31/2015 - 17:05

When you're working on a web application, you want to keep your head in the Angular/Knockout/Ember, CSS, and HTML. You want to keep thinking about the front end. But then you get to a point where you need to save some data, record a user action, or query to update the page. And so you stop what you are doing and switch to the back end. Advantages over 3-tierThe three-tier style of creating web applications leads you to create a back-end MVC controller for each kind of resource that the app might want to save. The controller transforms it into a document or a set of relational rows and saves it to the database. Then another controller (or at least another method) queries based on parameters, reads that data back out of the database, and translates it into the JSON that the front end requires. For each new application feature, you need to touch all three tiers: front-end, back-end, and database. A universal back end cuts out all that extra work. The front end simply creates a JSON document and saves it, all from client code. Then it queries for data again using JSON, and the universal back end does all the work. For each new application feature, you just write front-end code. No (application-specific) Back EndThere are several universal back ends (Firebase, Hoodie, Parse, and others listed at nobackend.org). Jinaga is different. It uses immutable facts to ensure that changes are easy to distribute across several devices. Immutability makes conflict detection and resolution simple. Another advantage is that Jinaga is open source. Many of the above backends-as-a-service are commercial products. You host your data on their servers and pay them a monthly fee. But Jinaga is not a product. You install it on your own server and host your own data. Simple APIThere are only two functions that you will call from your front-end code. First, call fact in order to save some data: j.fact({

type: "Task",

list: {

type: "TodoList",

name: "Chores"

},

description: "Take out the trash"

});This records the fact that there is a task with the description "Take out the trash". Furthermore, it relates this fact to the list with the name "Chores". We can now call watch to query for all tasks within that list: function tasksInList(l) { return { type: "Task", list: l }; } var chores = { type: "TodoList", name: "Chores" }; function taskAdded(task) { // Render the task in the UI } j.watch(chores, [tasksInList], taskAdded); This will query for all tasks currently in the list, and add them all to the UI. But it doesn't stop there. It will continue adding tasks to the UI as the user enters more: it will call taskAdded whenever fact is called. And one more thing. It might be called on another browser. That works, too. There's one single API function for loading, refreshing, and synchronizing data. Get StartedTo get started using Jinaga, install Mongo, Node, and Bower for your platform. Then get the NPM and Bower packages for Jinaga, and write your very own universal back end: var JinagaDistributor = require("jinaga/jinaga.distributor.server"); var MongoProvider = require("jinaga/jinaga.mongo"); var url = 'mongodb://localhost:27017/dev'; var port = 8888; var mongo = new MongoProvider(url); JinagaDistributor.listen(mongo, mongo, port); Start the app and connect from the front end: var j = new Jinaga(); j.sync(new JinagaDistributor("ws://localhost:8888/")); That's the last time you'll need to touch the back end. Turing's KeyboardSubmitted by Michael L Perry on Mon, 10/05/2015 - 23:14

Alan Turing set out to prove that some problems are undecidable. Not that they don't have a solution -- Kurt Godel proved that several years earlier. No, Turing proved that you cannot even decide whether or not they have a solution. To do so, he created a keyboard. On this keyboard were symbols that represented operations that a machine could perform. A programmer would sit at this keyboard and enter a string of symbols onto a tape, leaving spaces in between for a Universal Turing Machine to use as a workspace. The Universal Turing Machine was capable of executing any program that the programmer was clever enough to enter using the keyboard that Turing provided. Now enter an observer. The observer would like to know whether the program meets some set of requirements. Take a simple requirement: that the program print a zero. Sure, it would be easy to write a program that satisfies this requirement, but the observer doesn't get to write the program. He is simply given a program, and he has to decide whether or not it prints a zero. The programmer could type anything at all on Turing's keyboard. If the programmer typed "Print 0", then the observer would declare the program correct. If the programmer typed "Print 1", then he could declare it incorrect. But he could just as easily type convoluted branching logic with a "Print 0" embedded within. If the observer were unable to follow the branching logic to completion, he may find it difficult to discover whether the embedded statement is ever executed. Turing showed that there are some programs that could be written, where the observer would not be able to decide. Decidable ProgramsSo if we cannot even prove this simple assertion about an arbitrary program, what hope have we of more interesting assertions? How can we prove, for example, that a banking program does not create or destroy money? How can we prove that a communication program opens a connection before sending a message? In short, how can we prove that our software has no bugs? There is only one way. We need only take Turing's keyboard away from the programmer. Let us not allow him to enter any arbitrary sequence of instructions. Instead, let's confine him to a small set of transforms. He must start with an empty program. Then, to achieve a single requirement, he must transform the program by following a constrained set of rules. He must transform the program step-by-step into a more complex construction that eventually solves all given requirements. At each point along the path, the program is verifiable. We never let the programmer stray into the space where the program is undecidable. There are certainly some programs that cannot be written this way. But if we are careful in our selection of transforms, then perhaps we can write most of the interesting ones. A Solution to the Punch 22 ChallengeSubmitted by Michael L Perry on Fri, 01/09/2015 - 10:23

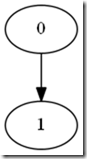

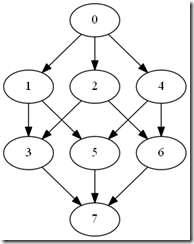

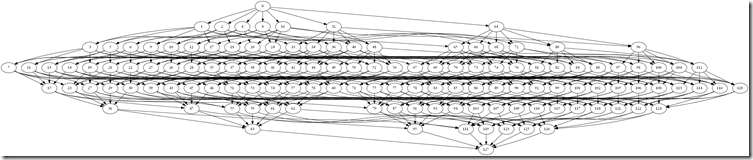

The Punch 22 Challenge is to find a 7-bit encoding of the 26 letters of the English alphabet that can be punched on a paper tape … twice. That is, after the tape has been used once with this encoding, it can be fed into a combination reader/writer machine that will punch additional holes to change to a different letter. The first thing we notice about this encoding is that it has to have more than one hole pattern assigned to a letter. On the first write, with an unused tape, we write one pattern. On the second write, we choose a different pattern based on the holes that are already punched. I’ll let you read the original article for a detailed description of the problem. What follows here is an analysis of the problem and then, if you scroll to the end, the solution. If you want to solve it yourself, stop reading now. Tape costs more than peopleApparently, paper is really expensive. I am embarrassed to admit how much time I’ve spent on this problem, but I’m sure you could buy quite a lot of paper tape with my hourly rate for that duration. Nevertheless, once a problem is solved, the solution can be scaled. This may be an academic problem, but others like it have lead to some real advancements in our industry. Huffman Coding. Compression. Reliable magnetic storage. At its heart, this is an Information Theory problem. How much information is in two punches of a tape? How can we compress all valid permutations of two characters down to 7 bits? How much of the information about the first character is retained after the second character is punched? And can you reconstruct with some probability the original message that was first written to the tape? I love Information Theory, so this problem had me at the start. ReachabilityThe problem is really one of reachability of nodes in a directed acyclic graph. When we punch a hole, we can go forward, but we can’t go back. If you had 3 bits, they would form a graph that looks a little like a cube. The graph of the full 7 bits looks a bit more complex. But the concept is the same no matter how big or small the graph. Each of the numbers represents a symbol that we could punch on the tape. The arrows point to all the symbols that we can reach by punching one more hole. We can construct the set of all symbols reachable from a single starting point. These are all the symbols that I could punch over the starting symbol. Suppose I had the symbol 42. This is the binary 0101010. It has three holes punched. From there, I could reach the following symbols: This tells me that if there was a 42 on the tape, then I could only punch the 16 symbols that are reachable from that node. That’s key. The initial symbolsLet’s review the problem. We need to create an encoding that allows the tape to be reused once. The encoding assigns a letter to each of the 128 possible symbols that I could punch. So what are the rules by which we will use this encoding? When the machine needs to punch a letter, it first reads what’s already there. If this is the first time using the tape, then no holes are punched; it’s a zero. In that case, it will punch the initial symbol for the letter. The second time through, each cell is going to contain one of the initial symbols. If I want to punch a different letter, then it has to be reachable from that initial symbol. If the current symbol already represents the letter, then you don’t need to change it. You have no idea what letter is coming next, so at least one symbol for every letter has to be reachable from each symbol in the initial set. That means that 42 cannot be an initial symbol. I can only reach 16 symbols from 42 (including 42 itself). By the pigeon-hole principle, I cannot assign 26 letters to 16 symbols. Looking back at the graph, we can see that the symbol at the top – zero – can reach the entire graph. At the second tier, we have symbols with one hole punched. They can reach only those symbols that have that hole punched, which is half of them. The third tier has two holes punched. It cuts reachability in half again. 42 is in the fourth tier, with three holes punched, so it has cut the number in half three times. Each tier can reach half of what the prior tier could. Let’s label the tiers and count how many symbols are in each tier, and how much of the graph they can reach.

Since we need to assign distinct letters to the symbols reachable from every initial symbol, they must all be able to reach at least 26 symbols. All of the initial symbols must be in one of the first three tiers: P0, P1, or P2. Lower tiers cannot reach enough symbols. And since we have to assign 26 initial symbols, we have to consume the first three tiers with only 3 slots left. There are a total of 29 symbols available for us to choose. That means that at least 18 of the initial symbols are in P2. Parceling the alphabetNow the challenge becomes assigning letters to the remaining tiers to be sure that the alphabet is covered from every symbol in P2. The initial symbol at P2 can reach itself, but the remaining 25 letters need to be accounted for in the lower tiers. If we were to solve the problem for P2, then P0 and P1 would be solved as well. P0 has only one symbol – zero, and it can already reach the entire graph. P1 has 7 symbols. Each one can reach 6 symbols in P2 by punching one of the remaining holes. If any one these 6 symbols can reach the entire alphabet, then so can the initial symbol in P1. Remember that we have at least 18 initial symbols in P2. How do we reach the alphabet from each of those 18 initial symbols? We can flip the problem, and instead ask how we can parcel out the 26 letters so that at least one symbol for each letter is reachable from all 18 of the initial symbols in P2. Let’s identify 26 sets of symbols that can be reached from at least 18 P2s. The first set is easy. It contains only the symbol 127, or binary 1111111. The entire graph can reach this symbol. Unfortunately, there is only of those, and we still have 25 letters to assign. Then we can construct a set based on the P6s. These are the symbols with 6 ones, such as 126, or binary 1111110. The initial symbols with a one in the final position cannot reach this symbol, so we have to add symbols that they can reach. Each of these P2s has one other bit set, so we need to choose symbols that cover all possible pairs of bits. Let’s draw those symbols from the other sets. Here’s an example: P6: 1111110 P4: 0001111 P4: 1110001 This set consumes one P6 and two P4s. We only have 7 P6s and 35 P4s to go around, so if we define 7 such sets, we’ve spent all of our P6s and 14 of our P4s. Let’s see if we can find a set that is less expensive. Again starting with a P6 and a P4, we can subdivide the remaining bits like this. P6: 1111110 P4: 0001111 P3: 0110001 P2: 1000001 This takes more symbols, but we’ve spread the cost out a bit more. We can still only have 7 of these, since the P6s are the limiting factor, but they leave a lot more of the P4s for other sets to use. This set uses a P2, which will be the initial symbol for the letter to which it is assigned. I’ll let you go through the exercise of identifying the sets that can be reached from the P2s. As you do, you can break them down by their cost in terms of symbols from each tier. This is what you will find:

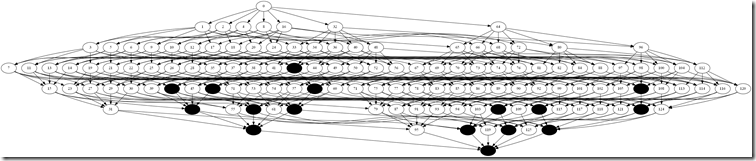

The set S6 is interesting. It consumes 2 P2s. One of those P2s will be the initial symbol for the letter to which it is assigned. The second will be one of the 3 remaining P2s that are not initial symbols. So there can only be at most 3 letters in S6. The solutionUpdate: The following is incorrect. Stefan Stenzel (@5tenzel) showed me a solution to this problem, which let me reverse-engineer my mistake. See if you can spot it. Select the hidden text at the bottom of the post to reveal the solution. And now we have enough information to solve the problem. All we need to do is determine how many of each set we can assign to the 26 letters of our alphabet. We can compute the cost in terms of symbols in each of the tiers P2 through P7. We know exactly how many of those symbols we have. So this is just an optimization problem. The optimal way to assign those sets is: 1S1, 7S3, 10S4, 4S5, and 3S6. This consumes 1P7, 7P6, 17P5, 34P4, 35P3, and 13P2. We have no more P3s left. We could trade an S5 for an S4 to get two more P3s, but then we have no more P4s left. But this is only 25 sets. 1 + 7 + 10 + 4 + 3 = 25. We don’t have enough sets to assign to all 26 letters. The pigeon-hole principle strikes again. So the solution is a non-solution. This is an impossible problem. Proving that it was impossible was difficult in itself. But it has provided us some value. For example, we know that we are likely to find a solution with a 25 letter alphabet. And we are likely to find a solution with an 8 hole tape. We won’t waste any time searching for a solution where we know that none can exist. This type of proof – that a solution does not exist – has been extremely important in the history of computer science. I refer you to the Gödel Incompleteness Theorem, Church and Turing on the Entscheidungsproblem, Turing’s Halting Problem, and P vs NP. The mistakeThe mistake was that the P2s that are not initial symbols are not consumed by a set. I made this mistake twice. First, I claimed that the P2 in a set must be the initial symbol of the assigned letter. That was wrong: it could be a leftover P2. Second, I claimed that there could be no more that 3 sets containing 2 P2s. That is incorrect because the leftover P2s can appear in any number of sets. To make matters worse, I did not create a complete table of sets. I gave up on combinations that I assumed would consume too many symbols. Complete the table and allow the leftovers to be reused, and you get plenty of wiggle room to allow a solution. Stefan’s solution had the following sets:

Which very neatly consumes 1P7, 7P6, 21P5, 35P4, and 30P3. The P2s are not all consumed, because many of them are the leftovers, reused from one set to the next. Sefan’s solution is: ABCIDKMEEOQBSYTLFUWTYBGCTPDQNVWJGZ-YXGLZRJUVNDRPNWJXCFVKRZOMEISHH--SVTIUPDKRHSGNLYIWOMLRJKFECOZXJRZBJEYOXTICKWQFHSUNTQPDVLYGMUBA Create a dependency map of SQL tables from foreign key constraintsSubmitted by Michael L Perry on Tue, 12/23/2014 - 12:09

A great tool for analyzing relationships is GraphViz. Install it and open up GvEdit. Now you can enter a text representation of a directed graph:

Run the graph generator and it produces a nice visual representation. The first line -- rankdir = BT -- tells GraphViz to rank items from bottom to top as it lays out dependencies. This makes all arrows point up. If you ever find an arrow pointing down, then that is part of a cycle. To analyze a SQL schema, set your SQL output to text and run this query:

Then copy the text output and paste into GvEdit. Now you have an overview of your SQL data model with related tables clustered together, and the high-level entities at the top. Toward Pattern Matching in C#Submitted by Michael L Perry on Mon, 10/27/2014 - 14:50

A powerful tool in many functional programming languages is called "pattern matching". It is a concise syntax for expressing conditions based on the shape of a value. For example, in Haskell, the factorial function can be expressed as a pattern: factorial :: (Integral a) => a -> a factorial 0 = 1 factorial n = n * factorial (n - 1) Learn You a Haskell: Syntax in Functions Often the pattern is expressed in terms of the type of the value. For example, Maybe is a type that can either be Nothing or Just some value. applyMaybe :: Maybe a -> (a -> Maybe b) -> Maybe b applyMaybe Nothing f = Nothing applyMaybe (Just x) f = f x Learn You a Haskell: A Fistful of Monads Notice that the pattern extracts the parts of the value, and gives you names for those parts. Toward patterns in C#So what would patterns look like in C#? Here's a start. I'm not sure where I will take this, if anywhere. Say we wanted to return one of two things from a function, based on whether it was successful or not. private Pattern<int, Error> ParseInteger(string s, string description)

{

int value;

if (!int.TryParse(s, out value))

return new Error(new List<string>

{

String.Format("Invalid value for {0}: {1}.", description, s)

});

else

return value;

}

We define a Pattern class taking generic parameters indicating the types that could be returned. To make it possible to return the types directly as written above, we define implicit operators. public abstract class Pattern<TOption1, TOption2>

{

public static implicit operator Pattern<TOption1, TOption2>(TOption1 value)

{

return new Match<TOption1, TOption1, TOption2>(value);

}

public static implicit operator Pattern<TOption1, TOption2>(TOption2 value)

{

return new Match<TOption2, TOption1, TOption2>(value);

}

}

Here we create a Match type, which inherits Pattern to represent one of the two options. class Match<TValue, TOption1, TOption2> : Pattern<TOption1, TOption2>

{

private readonly TValue _value;

internal Match(TValue value)

{

_value = value;

}

}

Unfortunately, there is no way in C# to express the constraint that TValue is either TOption1 or TOption2. However, that constraint is implicit in the way that a Match is constructed. By making this class private and its constructor internal, we ensure that only classes in the assembly (i.e. Pattern) can create a Match. Selecting a path based on the patternNow that we have the Pattern class, and can construct a Match to satisfy the pattern, we can select our response. For example, we could do the following: ParseInteger(s, "iteration count")

.If<int>(i => _configuration.IterationCount = i)

.If<Error>(HandleError)

Here HandleError is a method taking an Error object. We can define the If method in Pattern as follows: public abstract class Pattern<TOption1, TOption2>

{

public abstract Pattern<TOption1, TOption2> If<T>(Action<T> action);

}

Again, we can't express the generic constraint that T is one of TOption1 or TOption2. And this time, we don't have any context that enforces that relationship. We just need to trust that the consumer of this API wouldn't write something that would never match. class Match<TValue, TOption1, TOption2> : Pattern<TOption1, TOption2>

{

public override Pattern<TOption1, TOption2> If<T>(Action<T> action)

{

if (typeof(T) == typeof(TValue))

{

action((T)(object)_value);

return Matched;

}

return this;

}

}

The C# compiler could actually expand the generic parameters at compile time and come up with a constant for the condition. That would allow it to optimize this method body to one of the two branches. I don't think it actually does, but it could be measured. The Matched object returned when the condition is matched is a simple flyweight that always fails an If. class Matched<TOption1, TOption2> : Pattern<TOption1, TOption2>

{

public override Pattern<TOption1, TOption2> If<T>(Action<T> action)

{

return this;

}

}

The flyweight is instantiated in Pattern. public abstract class Pattern<TOption1, TOption2>

{

protected static readonly Pattern<TOption1, TOption2> Matched = new Matched<TOption1, TOption2>();

}

Future directionsTo make this Pattern class generally useful, it needs to be expanded in a number of ways. First, the number of options must be made flexible. It should support three, four, or possibly even just one option. This could be done through nesting (Pattern<T1, Pattern<T2, T3>>). Second, the If method should also support conditions. In Haskell, these are called guard clauses. It isn't just the type of the expression that matters, it's also the value. Third, there should be an Else method that is called if none of the If methods match. This should be easy to construct. Fourth, the If method should take Func<T, TResult>, and not just Action<T>. You should be able to use pattern matching within an expression. Quite possibly, the expression would return yet more Pattern types based on the types of all of its results, so that patterns could be further composed. And fifth, we should be able to concisely express an action to be taken at any point within a composition. For example, the HandleError method used above should be called on any path where Error is encountered. There are almost certainly more ways that this concept can be expanded. Do you have any ideas you would like to try? Three-Way Data Binding in Universal Apps: View, View Model, and CloudSubmitted by Michael L Perry on Sun, 10/05/2014 - 11:36

XAML gives us two-way data binding: view to view model. But how do you synchronize data between phones and tablets? Learn how to data bind to the cloud. When a change is made on one device, the change appears on another. Data flows seamlessly across devices. But those devices aren't always connected. And even when they are, users don't want to wait for a network connection before they can see or work with their data. So data needs to be stored locally, and synchronized in the background. Build a universal app where the phone and tablet share a common data model. That model is synchronized through a cloud service and into local storage. Project that storage through the view model, and data bind it to the view. Presented at Dallas TechFest 2014, DFW Mobile .NET User Group Q.E.D. Code Podcast, Episode 1: PredicatesSubmitted by Michael L Perry on Sat, 09/06/2014 - 21:23

In the inaugural episode of the Q.E.D. Code Podcast, we introduce the concept of using predicate calculus to prove the correctness of software. Listen to the show at http://qedcode.libsyn.com. PredicatesA predicate is a statement that has a truth value. It is either true or false. Predicates can be simple statements, or they can be build up using operators. The “implies” operator is particularly important to software, because it allows us to tie the state of the system to the line of code being executed. The application of predicates to proving software correctness is covered in detail in Bertrand Meyer’s book Object Oriented Software Construction. He defines a system called Design by Contract. We follow predicates through lines of code until we reach a method. There, it is up to us to prove that it is correct to call the method at that time. We do so by validating the preconditions of the method. These become predicates that the method can apply to the first line of its code, and follow through its execution. When a method is finished, it asserts some postconditions. These become predicates that we as the caller can use to assert preconditions of later method calls. RefactoringAlgebra teaches us methods of rewriting equations to derive a solution to a problem. Refactoring is a similar process done on code. Martin Fowler formalized the process in his book Refactoring: Improving the Design of Existing Code. Refactorings are modifications to code that do not change its behavior. He provides systematic ways of applying these changes, in a way that gives us confidence that we do not break the system along the way. The opposite of a refactoring is a transformation. This is a change that modifies the behavior of the code. Robert C. Martin has organized them in the Transformation Priority Premise, which favors simpler changes over more complex ones. If we apply changes in this order, he reasons, we will make smaller changes and end up with simpler code. John von NeumannBorn in Budapest Hungary in 1903, John von Neumann was a mathematical prodigy. He contributed to a broad range of subjects, including algebra, set theory, and economics. He even defined whole new lines of study, most significantly of game theory. Game theory tells us how players will respond in non-cooperative scenarios. The simplest of scenarios is a zero-sum game of perfect information. These are games like tic-tac-toe, Connect Four, and chess, where all of the information is available to all players at all times. And for one player to win, the other must lose. But his theory expands to more than just the chess board. Games can have non-zero sums and imperfect information. These types of games arise in business negotiations, and during war time. John von Neumann applied game theory to some very difficult problems in these areas, and even coined the phrase Mutually Assured Destruction (MAD). An example of the application of game theory to war time can be found in the 1983 movie War Games. His contributions to the field of computing were no less revolutionary. He brought the idea of stored programs to the designs of the ENIAC and EDVAC computers. Before this, computers were constructed to solve a specific problem. They had their instructions hard wired into their processing units. But von Neumann designed a machine code that could be stored in the computer’s memory. This allowed the computer to be reprogrammed to solve new problems. Now all digital computers that we use today employ the von Neumann architecture. Cryptography FundamentalsSubmitted by Michael L Perry on Thu, 03/20/2014 - 21:06

One of the most direct applications of mathematics to software is cryptography. The mathematics of cryptography give us confidence that messages are authentic and confidential. How does that work? Information TheoryModern digital encryption is founded on the work of mathematician Claude E. Shannon. He taught us how to measure how much information is in a message. This allows us to correct errors in transmission, compress files, and encrypt messages. He measured information in bits. A bit, just as in a digital computer, is a unit that can be in one of two states. If your language only allows you to say two things (say it only contains the words “car” and “derrigible”) then a statement only has one bit of information in it. You chose one or the other. It doesn’t matter how long the words are. If you can choose one of four statements, then your language has 2 bits. One of 8, it has 3 bits. So what if you could choose from a possible set of 10 things to say? In other words, how many bits of information are in a decimal digit? Simple: log2 10 = 3.32 bits. Languages that are highly redundant have fewer bits of information than the actual text used to express them. For example, in English, a letter “q” is usually followed by a “u”. That means that the “u” is largely redundant. Even though it takes another log2 26 = 4.7 bits of information to write the additional letter, it doesn’t contribute much more information to the message. The ratio of information in a message as compared to the length of the message is its relative entropy. 1 minus entropy is redundancy. The English language contains about 50% entropy, 50% redundancy. That’s why English text can be compressed to about 50% of its original size. When we compress, we squeeze out redundancy. The message gets close to having a 1:1 entropy ratio. And when we transmit a message over a noisy channel, we can add redundancy to make it possible to reconstruct the message on the other side if parts of it get scrambled. But what about encryption? Well, to encrypt a message, you want to combine the information from two different channels. You need to do this in such a way that it is difficult to separate the information once its combined. The two channels that we’ll combine are the key and the plaintext. They mix together to form the ciphertext. Information theory tells us how difficult it will be to separate the two again, assuming that you don’t already have one or the other (the key or the plaintext). To securely encrypt a message, we have to spread the information in both the key and the message evenly throughout the ciphertext. So a small change in the key leads to a large change in the ciphertext. And a small change in the plaintext also leads to a large change in the ciphertext. Spreading the key is called confusion. Spreading the plaintext is called diffusion. The algorithms that we’ve designed for symmetric encryption do a good job of both confusion and diffusion. AES, the Advanced Encryption Standard, is a good example. Inverse FunctionsSymmetric keys have to be exchanged to be useful. They have to be moved. And in so moving, they may be compromised. So how can we exchange symmetric keys with someone over a potentially unsecure network? And how can we be sure of the identity of the recipient? What about the identity of the sender? To solve these problems, we can use inverse functions. Suppose that I make up two functions, f(x) and f-1(x). If I give you the first, but keep the inverse for myself, then you can challenge me. You make up a random number, and run it through f(x). They you give me the answer and ask me what was your random number. Only someone who knows f-1(x) could tell you. And I haven’t shared f-1(x) with anybody, so if I get it right it must be me. You can do the same thing to send me a secret message. Just encrypt it with a symmetric key, then run the symmetric key through f(x) and send me the answer. It doesn’t matter if an eavesdropper intercepts it. They don’t know f-1(x). So only I can get back to the key for the message. Ah, you say. But what if I replaced the correct message with a fake? Well, I’ve got you there, too. You see, the sender also has a pair of inverse functions, g(x) and g-1(x). I know g(x). He used g-1(x) on a hash of the message and sent that, too. That’s called the signature. So I can hash the message I receive, and run his signature through g(x). If I come up with the same number, then I know the message must have come from him. You can find out more about the mathematics and mechanics of modern digital encryption at http://cryptofundamentals.com. The end of null reference exceptionsSubmitted by Michael L Perry on Tue, 04/16/2013 - 11:13

Or get the model and application in one project:

Null objectsVersion 2.0 introduces null objects. Instead of returning null, the Correspondence API will now return an instance with the IsNull property set to true. You no longer have to write code like this: if (_sessionPlace.Place == null || _sessionPlace.Place.Room == null || String.IsNullOrEmpty(_sessionPlace.Place.Room.RoomNumber.Value)) return null; return "Room: " + _sessionPlace.Place.Room.RoomNumber.Value; Now you can eliminate all of those null checks. You will not get a null reference exception! Object references from null objects will be other null objects. So you can string references together without worry. Native properties of null objects will be null or zero. So you can rewrite the above code as: string roomNumber = _sessionPlace.Place.Room.RoomNumber.Value; return String.IsNullOrEmpty(roomNumber) ? null : "Room: " + roomNumber; API changesI labeled this version 2.0 because it is technically a breaking change. If your code checks for null, that check will no longer be valid. Instead, you should check for one of the following new properties:

All references are loaded asynchronously, so IsLoaded will initially be false. When it finishes loading, it will become true and your view model will reevaluate the expression. You can just write your view models normally, and you will get the benefit of asynchronous loading. Outside of a view model getter, you may need to ensure that a predecessor or property is loaded before continuing. You can use these methods to do just that:

Finally, the FindFact method gets an upgrade. Not only does it return a null object if the fact is not found, it also has become observable. You can call FindFact in a view model. At first, it will return an IsLoaded = false object. Then, if the fact has not yet been created, it will change to an IsNull = false object. Finally, when the matching fact is added, it will return that fact. For each of these changes, your view model will notify property changed. The upshot is that you can write view models that depend upon facts that have not yet been created. Slot slot = _community.FindFact(new Slot(_attendee, _sessionPlace.Place.PlaceTime)); if (slot.CurrentSchedules.Any()) return "Scheduled"; else return "Not scheduled"; At first there is no Slot fact for the sessions time slot. So FindFact returns a null object, and CurrentSchedules will be empty. When the user schedules the session, the app will create both the Slot fact and the subsequent Schedule fact. That will cause the view model property to change, FindFact will return the actual Slot, and CurrentSchedules will contain the new scheduled session. The FindFactAsync method is deprecated. Use FindFact().EnsureAsync() instead. Please try out the new null object support in Correspondence 2.0. You will need to update your code to remove null checks and replace them with the IsNull property where necessary. But for the most part, you can just chain references together, and you will get the desired result. And you will not get a null reference exception! |

In the very same stroke wherein he created the computer program, the architect declared that it cannot be verified.

In the very same stroke wherein he created the computer program, the architect declared that it cannot be verified.