Exclusive end dates

Submitted by Michael L Perry on Tue, 03/13/2012 - 12:35

They all have one thing in common. They specify an expiration date inclusively. Usually, this just makes things hard. Sometimes it leads to real problems.

Azure 2012

John Petersen wrote up a good summary of the leap day Azure outage. In it, he explains clearly why the simple solution of AddYears(1) is not enough. Azure experienced an outage because one certificate was valid through February 28, 2012, while the next was valid starting March 1, 2012. The code failed to account for leap day. As John explains, simply using proper date logic instead of string manipulation will still not account for the extra day.

Tiny gaps

Code that checks for times between 12:00 am and 11:59:59 pm fails to account for the last second of the day. I’ve seen people include milliseconds in that check. While this fills in the gap to the precision of the clock, it makes a bold assumption. Times are often stored using floating-point values, which are subject to round-off error. You are assuming that you will always round into the valid range instead of out into the tiny gap.

Consecutive ranges

Whenever software needs to handle a date or time range, it’s usually because something else is going to happen in the next consecutive range. We could be starting the next fiscal year, rolling over to the next log file, or enabling the next certificate. When ranges are specified using inclusive end times, the start time for the next consecutive range is not equal to the end time of the previous one. At a minimum, this makes the calculation of consecutive ranges more complex than it needs to be. You need to add or subtract a day, a second, or a millisecond, depending on the precision of your clock. At worst, this allows for gaps. They might be tiny. Or they might be a complete leap day.

Inclusive start, exclusive end

The simplest solution is to specify date ranges with an inclusive start and an exclusive end. In mathematics, this is written as “[)”, as in “[5/1/2011, 5/1/2012)”. In code, it’s just >= and <.
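Here is a minimal C# sketch of the [start, end) convention. It uses a hypothetical DateRange type that does not come from any library. The certificate dates are illustrative only. Because the end is exclusive, a one-year range built with AddYears(1) hands off to the next range at exactly the same instant. As a result, leap day falls inside exactly one of the two ranges.

```csharp
using System;

// Hypothetical DateRange type illustrating [Start, End): inclusive start, exclusive end.
public readonly struct DateRange
{
    public DateTime Start { get; }
    public DateTime End { get; } // exclusive: End itself is NOT in the range

    public DateRange(DateTime start, DateTime end)
    {
        if (end < start)
            throw new ArgumentException("End must not precede start.", nameof(end));
        Start = start;
        End = end;
    }

    // The entire membership test: >= on the start, < on the end.
    public bool Contains(DateTime moment) => moment >= Start && moment < End;

    // The next consecutive range begins exactly where this one ends.
    // No day, second, or millisecond adjustment is needed.
    public DateRange Next(Func<DateTime, DateTime> advance) => new DateRange(End, advance(End));

    // For display only: the last calendar day that contains any moment of the range.
    public DateTime LastDayForDisplay => End.AddTicks(-1).Date;
}
```

For example, `new DateRange(new DateTime(2011, 3, 1), new DateTime(2011, 3, 1).AddYears(1))` covers every moment of February 29, 2012. Calling `.Next(d => d.AddYears(1))` on it produces a range that starts at midnight on March 1, 2012, with no gap in between. LastDayForDisplay exists only for presenting the range to people. Comparisons never use it.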
This has two advantages: it simplifies consecutive range calculations, and it fills the gaps. The beginning of the next consecutive range is equal to the end of the current one. You don’t have to think about it. There is no need to add or subtract days. You don’t need to know the precision of your clock. There is no gap between < and >=. These operators are opposites. If you compare two values with one of them, you will always get the opposite answer from the one you would get with the other. This holds even for floating-point values, as long as neither is NaN. It is unambiguous on which side of the line a value falls, as long as one side is inclusive and the other is exclusive. People don’t usually think in terms of exclusive ends. If you read “Sale ends Saturday” only to find that items were full price Saturday morning, you’d be upset. So translate exclusive ends into inclusive ones when you present them to the user. But always store and calculate date ranges with inclusive start dates and exclusive end dates.

KnockoutCS

Submitted by Michael L Perry on Wed, 03/07/2012 - 10:07

KnockoutJS is a JavaScript dependency tracking library that enables MVVM in the browser. I took the syntax and simplicity of KnockoutJS and ported it to C#. Thus was born KnockoutCS.

Simple data binding

Like any pattern, MVVM is not appropriate in all situations. Sometimes an application is just too simple for an MVVM framework. But at what point do you make the switch? KnockoutCS fits into the gap between Hello World and Composite Enterprise Application.

Start by defining a data model. Use virtual properties to give KnockoutCS the ability to insert its dependency tracking hooks.

```csharp
public class Model
{
    public virtual string FirstName { get; set; }
    public virtual string LastName { get; set; }
}
```

When you construct this model, use the KO.NewObservable method. Then use KO.ApplyBindings to bind it to your view.

```csharp
private void MainPage_Loaded(object sender, RoutedEventArgs e)
{
    Model model = KO.NewObservable<Model>();
    DataContext = KO.ApplyBindings(model, new { });
}
```

You now have INotifyPropertyChanged behavior injected into your model. This is your basic Hello World starting point.

Computed properties

That little anonymous object “new { }” is your gateway to more interesting behavior. Insert a computed property using the KO.Computed method.

```csharp
private void MainPage_Loaded(object sender, RoutedEventArgs e)
{
    Model model = KO.NewObservable<Model>();
    DataContext = KO.ApplyBindings(model, new
    {
        FullName = KO.Computed(() => model.FirstName + " " + model.LastName)
    });
}
```

Now you can data bind to the computed property. It will automatically update (and fire PropertyChanged) when one of its observable properties changes.

Queries

Almost any data model is going to have a collection. Declare collections as properties of type IList. There is no need to make these virtual.

```csharp
public class Parent
{
    public IList<Child> Children { get; set; }
}
```

Then, when you bind the model, you can inject a computed property defined by a LINQ query.
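This post doesn’t show how KnockoutCS tracks dependencies internally. The following is a minimal, single-threaded sketch of the idea behind computed properties. It uses hypothetical Observable<T> and Computed<T> classes. They are not part of KnockoutCS, and the real library may work quite differently. While a computed value evaluates, every observable it reads records it as a dependent. Changing an observable then marks those dependents stale.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical, single-threaded sketch of dependency tracking.
// Illustrative only; this is not KnockoutCS's API or implementation.
public abstract class Dependable
{
    // The computed value currently being evaluated, if any.
    [ThreadStatic] private static Dependable _evaluating;

    private readonly List<Dependable> _dependents = new List<Dependable>();

    // Called on every read: remember which computed is reading us.
    protected void OnGet()
    {
        if (_evaluating != null && !_dependents.Contains(_evaluating))
            _dependents.Add(_evaluating);
    }

    // Called on every change: invalidate dependents. They re-register
    // the next time they are evaluated.
    protected void OnSet()
    {
        Dependable[] dependents = _dependents.ToArray();
        _dependents.Clear();
        foreach (Dependable dependent in dependents)
            dependent.Invalidate();
    }

    // Evaluate a function while recording this object as the reader.
    protected TResult Track<TResult>(Func<TResult> evaluate)
    {
        Dependable previous = _evaluating;
        _evaluating = this;
        try { return evaluate(); }
        finally { _evaluating = previous; }
    }

    internal virtual void Invalidate() { }
}

public sealed class Observable<T> : Dependable
{
    private T _value;

    public Observable(T value) { _value = value; }

    public T Value
    {
        get { OnGet(); return _value; }
        set { _value = value; OnSet(); }
    }
}

public sealed class Computed<T> : Dependable
{
    private readonly Func<T> _compute;
    private T _cached;
    private bool _upToDate;

    // Raised when the cached value becomes stale.
    public event Action Changed;

    public Computed(Func<T> compute) { _compute = compute; }

    public T Value
    {
        get
        {
            OnGet();                        // computed values can depend on computed values
            if (!_upToDate)
            {
                _cached = Track(_compute);  // re-discover dependencies while evaluating
                _upToDate = true;
            }
            return _cached;
        }
    }

    internal override void Invalidate()
    {
        if (!_upToDate) return;             // already stale; nothing new to report
        _upToDate = false;
        OnSet();                            // propagate to our own dependents
        Changed?.Invoke();
    }
}
```

The Changed event is where a view model would raise PropertyChanged. The LINQ query example relies on the same idea. Any observable property read while the query runs becomes a dependency.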
```csharp
private void MainPage_Loaded(object sender, RoutedEventArgs e)
{
    Parent parent = KO.NewObservable<Parent>();
    DataContext = KO.ApplyBindings(parent, new
    {
        Children = KO.Computed(() =>
            from child in parent.Children
            orderby child.Age
            select new ChildSummary(child)
        )
    });
}
```

This query will depend not only upon the source collection, but also upon any observable properties used to order or filter it.

Commands

When you data bind to the Command property of a button, you provide a delegate that will be called when that button is clicked. You can also provide a lambda that determines when that button is enabled.

```csharp
private void MainPage_Loaded(object sender, RoutedEventArgs e)
{
    PhoneBook phoneBook = KO.NewObservable<PhoneBook>();
    PhoneBookSelection selection = KO.NewObservable<PhoneBookSelection>();
    DataContext = KO.ApplyBindings(phoneBook, new
    {
        DeletePerson = KO.Command(() =>
        {
            phoneBook.People.Remove(selection.SelectedPerson);
            selection.SelectedPerson = null;
        }, () => selection.SelectedPerson != null
        )
    });
}
```

Since this lambda references a property of an observable, the button will be enabled and disabled as the user changes that property.

There is more to KnockoutCS. Try it out and see if you like it. Right now it only works for Silverlight 5, but I can easily port it to Silverlight 4 or the full WPF stack. I’m still working out how to port it to Windows Phone and WinRT.

Historical Modeling makes offline data analysis easy

Submitted by Michael L Perry on Mon, 02/06/2012 - 18:20

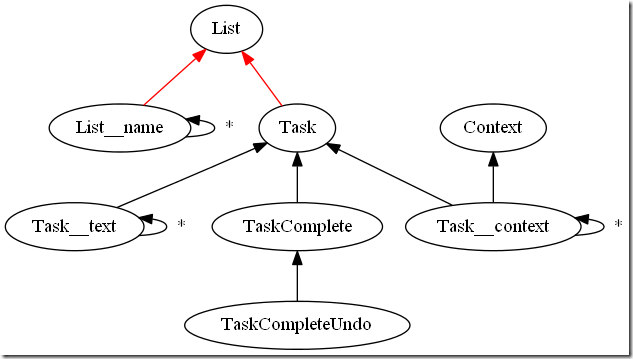

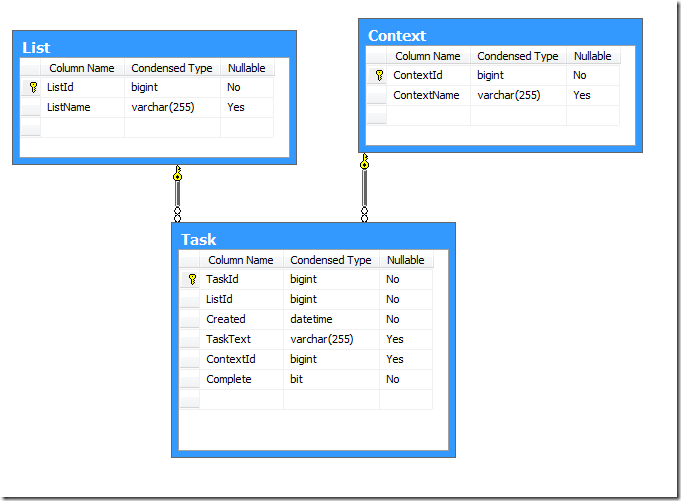

Because I built my Windows Phone application using Historical Modeling, I have been able to easily export user data to an analytical database. This is giving me insight into the way the application is used, and will lead to an improved user experience.

The HoneyDo List historical model

The HoneyDo List Windows Phone application was built using Correspondence, a collaboration framework based on the principle of Historical Modeling. Historical Modeling captures a history of facts, each one a decision made by a user. HoneyDo List defines facts like:

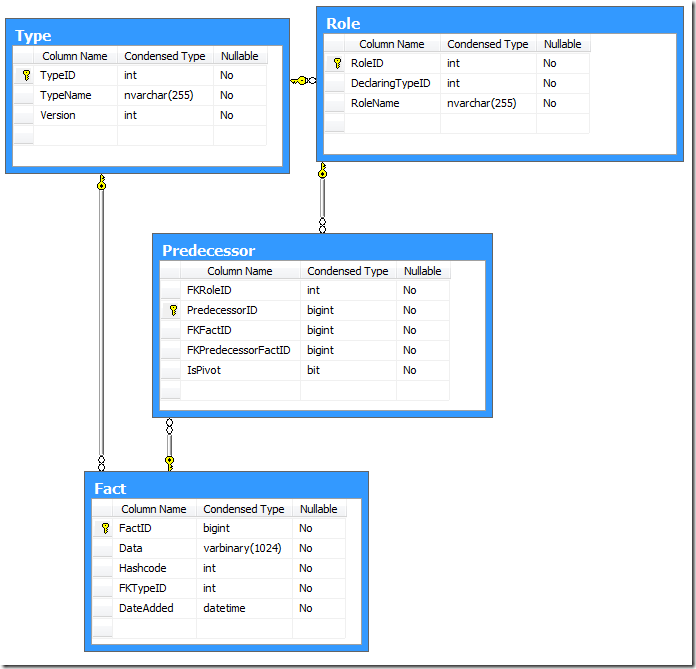

Although the names of some of these facts are nouns, it is important to remember that these are not entities or objects. They are facts that capture the creation of these objects. By convention, we just drop the verb “Create” because it would get very wordy and confusing. The relationships among these facts form a tree. Each of the arrows points to a predecessor of a fact. Think of this as a foreign key relationship. The red arrows are special. They indicate that the successor is published to its predecessor. Anybody subscribing to a List will see all of the related facts. This is what makes Correspondence a good collaboration framework.

The analytical database

My task was to analyze how the app was being used, so that I could decide what features should be in the next version. The historical model captures a history of facts. This history is a detailed account of application usage. All I needed to do was to mine this data. Correspondence was designed to make it easy to synchronize data across different data stores. Each client points to a distribution node, which publishes facts so that the clients can subscribe to the ones they care about. It has built-in support for several storage mechanisms, including isolated storage on the phone and SQL Server on the desktop. My first step in constructing an analytical database was to plug in the SQL Server storage mechanism and point it at the distribution node. The SQL database that Correspondence creates is not suitable for data mining. It stores all facts in a single table, regardless of type. All of the fields are serialized to a binary column within that table. It is impossible to query such a structure for any useful analytics. So the next step was to design a separate database with an application-specific schema. This database has separate tables for lists and tasks.

The data loader

The final step was to populate the analytical database from the historical database. This is where things really got fun.
Take a look at each of the facts in the historical model. Each one is a record of a decision that the user made. We can turn each fact into an insert, update, or delete operation. This is what the user would have done had they been working directly against the analytical database. We just write a method that maps each fact to the appropriate SQL.

```csharp
public void Transform(CorrespondenceFact nextFact, FactID nextFactId, Func<CorrespondenceFact, FactID> idOfFact)
{
    using (var session = new Session(_connectionString))
    {
        session.BeginTransaction();
        if (nextFact is List)
        {
            Console.WriteLine(String.Format("Insert list {0}", nextFactId.key));
            InsertList(session, nextFactId.key);
        }
        if (nextFact is List__name)
        {
            List__name listName = nextFact as List__name;
            Console.WriteLine(String.Format("Update list name {0} {1}", idOfFact(listName.List).key, listName.Value));
            UpdateList(session, idOfFact(listName.List).key, listName.Value);
        }
        //...
        // Record this fact as the last one processed, in the same transaction.
        UpdateTimestamp(session, nextFactId);
        session.Commit();
    }
    LastFactId = nextFactId;
}

private static void InsertList(Session session, long listId)
{
    session.Command.CommandType = CommandType.Text;
    session.Command.CommandText = "INSERT INTO List (ListId) VALUES (@ListId)";
    session.ClearParameters();
    session.AddParameter("@ListId", listId);
    session.Command.ExecuteNonQuery();
}

private static void UpdateList(Session session, long listId, string listName)
{
    session.Command.CommandType = CommandType.Text;
    session.Command.CommandText = "UPDATE List SET ListName = @ListName WHERE ListId = @ListId";
    session.ClearParameters();
    session.AddParameter("@ListId", listId);
    session.AddParameter("@ListName", listName);
    session.Command.ExecuteNonQuery();
}
```

The analytical database keeps the ID of the last fact that it processed. It updates this ID with each change.
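The following minimal in-memory sketch makes the checkpoint idea concrete without a database. Everything in it is hypothetical. Fact, ListCreated, ListNamed and AnalyticalStore stand in for the generated Correspondence classes and the SQL tables. The atomic “commit” is only simulated: changes are built on a copy, and the copy is then published together with the new last-fact ID. The sketch shows why keeping that ID alongside the data makes the loader safe to restart. Facts at or below the checkpoint are skipped, so nothing is applied twice.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical fact types standing in for the classes Correspondence generates.
public abstract class Fact
{
    protected Fact(long id) { Id = id; }
    public long Id { get; }
}

public sealed class ListCreated : Fact
{
    public ListCreated(long id) : base(id) { }
}

public sealed class ListNamed : Fact
{
    public ListNamed(long id, long listId, string name) : base(id)
    {
        ListId = listId;
        Name = name;
    }
    public long ListId { get; }
    public string Name { get; }
}

// Hypothetical in-memory stand-in for the analytical database.
public sealed class AnalyticalStore
{
    private Dictionary<long, string> _listNames = new Dictionary<long, string>();

    public IReadOnlyDictionary<long, string> ListNames => _listNames;
    public long LastFactId { get; private set; }

    // Apply one fact and advance the checkpoint together. Changes are made
    // to a copy, so a failure part-way through leaves both untouched.
    private void Apply(Fact fact)
    {
        var working = new Dictionary<long, string>(_listNames);
        switch (fact)
        {
            case ListCreated created:
                working[created.Id] = "";            // like INSERT INTO List; unnamed for now
                break;
            case ListNamed named:
                working[named.ListId] = named.Name;  // like UPDATE List SET ListName = ...
                break;
        }
        _listNames = working;
        LastFactId = fact.Id;
    }

    // Resume after the last processed fact. Assumes fact IDs increase, so that
    // ordering by ID also puts predecessors before successors.
    public void CatchUp(IEnumerable<Fact> history)
    {
        foreach (Fact fact in history.Where(f => f.Id > LastFactId).OrderBy(f => f.Id))
            Apply(fact);
    }

    // After a schema change: drop everything, then call CatchUp to replay the history.
    public void Reset()
    {
        _listNames = new Dictionary<long, string>();
        LastFactId = 0;
    }
}
```

Against SQL Server, the copy-and-swap would be replaced by a real transaction around both statements, as in the Transform method.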
Both of these database operations are performed within the same transaction, so there is no danger of missing or duplicating a fact. It’s like using a transactional queue without the overhead of the distributed transaction coordinator. The analytical database uses fact IDs as primary keys, rather than defining its own auto-increment key. For the foreign key relationships to be valid, the parent record must be inserted before the child. Fortunately, Correspondence ensures that this is always the case. Predecessors are always handled before their successors.

Future analysis

Now that I have HoneyDo List data loaded into an analytical database, I can see exactly how people are using the system. I have a few features planned, and I will get those deployed to the Marketplace very soon. When the new version is out there, I’m sure that I will come up with more analytics to capture. For example, how many people are sharing lists with their family? How frequently does the average user add a task, or mark it completed? As I come up with new analytics, I will need to modify the database. After each modification, I’ll simply drop all of the data and replay it from the historical model. Historical modeling has made it easy for me to separate the analytics from the collaborative application. The app doesn’t have to operate against the analytical database. But I can see the data as if it did, because I can tap into the history and replay it offline.

HoneyDo List for Windows 8

Submitted by Michael L Perry on Sun, 01/08/2012 - 14:47

I took the last two weeks of 2011 off work. During that time, I ported Correspondence to Windows 8, published HoneyDo List for Windows Phone, and submitted an entry for the First Apps contest. Here is a demo of HoneyDo List running on a Windows 8 tablet that Chris Rajczi generously loaned me.

HoneyDo List uses Zebra Crossing to generate and recognize QR codes. These codes contain a unique ID to which both devices can publish and subscribe. The tablet publishes all of its lists to the ID, and subscribes for updates. The phone, upon scanning the ID, subscribes to receive all of the tablet’s lists, and then publishes the ones that the user selects. This two-way collaboration continues after the lists are exchanged. Both devices subscribe to the shared lists. New tasks published on one device will be received on the other. It is my hope that the story of Correspondence – synchronizing facts among all your devices via publish/subscribe – will align with the story of Windows 8 – access to all of your applications and data wherever you happen to be. If so, I expect this to be the start of an exciting opportunity.

Awaitable critical section

Submitted by Michael L Perry on Thu, 01/05/2012 - 17:01

C# 5 and .NET 4.5 introduce the async and await keywords. In the very near future (Windows 8, WinRT), most API functions will be asynchronous. Code that you wrote using synchronous APIs will no longer work. For example, suppose you used to write to a file like this:

```csharp
lock (this)
{
    FileHandle file = FileHandle.Open();
    file.Write(value);
    file.Close();
}
```

Now you have to write to it using the asynchronous API. Just changing it to this won’t work:

```csharp
lock (this)
{
    FileHandle file = await FileHandle.OpenAsync();
    await file.WriteAsync(value);
    file.Close();
}
```

This code fails to compile with the error “The 'await' operator cannot be used in the body of a lock statement”. So you get rid of the lock:

```csharp
// BAD CODE! Multiple writes allowed.
FileHandle file = await FileHandle.OpenAsync();
await file.WriteAsync(value);
file.Close();
```

And now it works most of the time. But every once in a while, you get two writes to the same file. If you’re lucky, this will just result in an exception like “The process cannot access the file because it is being used by another process”. If you aren’t, this will corrupt your file.

The solution

So how do you protect a shared resource, such as a file, without blocking the thread? Enter the AwaitableCriticalSection. The task returned by EnterAsync() completes for only one caller at a time. Awaiting it gives you an IDisposable, so once you leave the using block, the next caller is allowed to enter. Threads are not blocked, but only one caller can be in the critical section at a time.

```csharp
using (var section = await _criticalSection.EnterAsync())
{
    FileHandle file = await FileHandle.OpenAsync();
    await file.WriteAsync(value);
    file.Close();
}
```

Download the source code and add it to your project. Feel free to change the namespace.

How it works

The AwaitableCriticalSection keeps a queue of Tokens. Each Token represents one call to EnterAsync(). A token implements IAsyncResult.
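The Token class itself isn’t listed in this post. The sketch below assumes Token needs only what the text describes: an IAsyncResult backed by a ManualResetEvent, plus a Signal(bool) method that records CompletedSynchronously. The member layout is an assumption and is not taken from the downloadable source.

```csharp
using System;
using System.Threading;

// Sketch of the Token described in the post (member layout assumed):
// an IAsyncResult whose wait handle is set when it is the caller's turn.
public sealed class Token : IAsyncResult
{
    private readonly ManualResetEvent _event = new ManualResetEvent(false);
    private volatile bool _isCompleted;
    private bool _completedSynchronously;

    // Called by the critical section when this token reaches the front of the line.
    // completedSynchronously is true when nobody else was inside.
    public void Signal(bool completedSynchronously)
    {
        _completedSynchronously = completedSynchronously;
        _isCompleted = true;   // volatile write publishes the flag above
        _event.Set();          // releases anything waiting on AsyncWaitHandle
    }

    public bool IsCompleted => _isCompleted;
    public bool CompletedSynchronously => _completedSynchronously;
    public WaitHandle AsyncWaitHandle => _event;
    public object AsyncState => null;
}
```

Task.Factory.FromAsync(token, result => ...) accepts any IAsyncResult. If the token is already completed, the task completes right away. Otherwise the task waits on the token’s handle. In this sketch the event is never reset, so each token is single-use, which matches one token per call to EnterAsync().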
A Token is basically just a wrapper around a ManualResetEvent, which can be signaled when it’s your turn to enter the critical section. Think of a Token as a “take-a-number” ticket, or a restaurant pager. AwaitableCriticalSection is the dispenser, or the hostess. When you call EnterAsync(), it dispenses a Token to you. At the same time, it places this token in a queue. If there is no one else in the critical section at that moment, then your token is instantly signaled. It passes true to the Signal method so that CompletedSynchronously will be set. This causes await to continue without relinquishing the thread.

```csharp
public Task<IDisposable> EnterAsync()
{
    lock (this)
    {
        Token token = new Token();
        _tokens.Enqueue(token);
        if (!_busy)
        {
            _busy = true;
            _tokens.Dequeue().Signal(true);
        }
        return Task.Factory.FromAsync(token, result => _disposable);
    }
}
```

Await will open up the async result, calling the lambda expression that returns _disposable. This is simply an object that calls Exit when you leave the using block.

```csharp
private void Exit()
{
    lock (this)
    {
        if (_tokens.Any())
        {
            _tokens.Dequeue().Signal(false);
        }
        else
        {
            _busy = false;
        }
    }
}
```

Exit signals the next token in line. This time, it passes false to let you know it didn’t complete synchronously. It had to wait. If there is nobody left in line, then it sets _busy to false. There is nobody in the critical section.

Locks block threads. New operating system APIs won’t allow you to block threads. So use an awaitable critical section to protect shared resources while maintaining a fast and fluid UI.

HoneyDo Windows Phone application

Submitted by Michael L Perry on Mon, 12/26/2011 - 19:11

The Windows Phone version of the HoneyDo List app is finished and has been submitted to the Marketplace. Here’s a demo of the list sharing feature.

Next I’ll start on the Windows 8 version. The phone app will make a good companion to the desktop or tablet app.

HoneyDo List Web Application

Submitted by Michael L Perry on Tue, 12/20/2011 - 18:15

The multi-platform HoneyDo List application starts with the web. This version of the application, hosted on AppHarbor, lets you share lists between devices using your phone’s camera. Create a list in your browser, then share it via QR code with your phone. The lists will be kept in sync. Here’s a demo.

My next step is to get the Windows Phone companion app reading these QR codes and sharing lists. Once that’s submitted to the Marketplace, I’ll start working on the Windows 8 app.

The Correspondence unpublish feature

Submitted by Michael L Perry on Tue, 12/20/2011 - 15:33

Correspondence queues don’t work like your typical queues. A subscriber sees the entire history of facts that were published to the queue. Retrieving a fact does not remove it from the queue. While this is a good thing for synchronization among multiple parties, it does have one drawback. When you first encounter a queue, you have to suffer through all of history before you get to the facts that you are really interested in. To eliminate this problem, I added a feature to Correspondence that allows it to unpublish a fact. When the fact is no longer important, it does not appear in the queue. Here’s a demonstration of the feature.
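Apart from the demonstration, the queue rules described above can be modeled in a few lines of C#. This is a hypothetical in-memory sketch, not Correspondence’s API. FactQueue and its publishedWhile predicate are invented for illustration. Publishing appends to a history, and reading never removes anything. A predicate evaluated against each published fact decides whether a subscriber still sees it.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical model of a history-keeping queue with "unpublish".
// Not Correspondence's API; it only mirrors the rules described in the text.
public sealed class FactQueue<TFact>
{
    private readonly List<TFact> _history = new List<TFact>();
    private readonly Func<TFact, bool> _publishedWhile;

    // publishedWhile plays the role of the where clause on a publish statement.
    public FactQueue(Func<TFact, bool> publishedWhile)
    {
        _publishedWhile = publishedWhile;
    }

    // Publishing appends to the history; nothing is ever removed.
    public void Publish(TFact fact) => _history.Add(fact);

    // A subscriber sees the whole history, minus facts whose predicate is now false.
    // Reading does not consume anything.
    public IReadOnlyList<TFact> Read() => _history.Where(_publishedWhile).ToList();
}
```

In the test below, the predicate consults a set of completed task names, which stands in for the existence of a TaskComplete fact.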

To unpublish a fact, simply define a where clause in the publish statement. The where clause needs to use a predicate directly on the published fact.

```
fact Task {
key:
    publish ListContents listContents
        where not this.isComplete;

query:
    bool isComplete {
        exists TaskComplete c : c.task = this
            where not c.isUndone
    }
}
```

A Task will only be published to ListContents as long as it is not complete. Once the task is marked completed, it will be unpublished. People subscribing to the list later will not see any of the completed tasks.

Windows 8 Metro and companion Phone app in two weeks

Submitted by Michael L Perry on Fri, 12/16/2011 - 10:58

Microsoft is sponsoring a Windows 8 app building contest. Entries are due January 8. I have two weeks off for Christmas and New Years. So I know what I’ll be doing. For the next two weeks, I will keep regular office hours. By the end of the two weeks, I will have a HoneyDo List Metro application and a companion Windows Phone application. I’m starting with:

My tasks are:

If I have time left over, I’ll spend it styling the web application. Follow my progress on Twitter. I will also post regular video demonstrations on this blog.

Computing

Submitted by Michael L Perry on Sat, 12/03/2011 - 09:02

Grady Booch is creating a TV series called Computing, and he needs your help. I want to donate $50 to the project, but you can help me to give more. Here's what you do:

For the first 5 tests, I will donate $10 each. For the next 10, $5 each. Then $1 for each test thereafter up to a total of $500. All entries must be in by December 20, at which time I will post the best tests. Our industry needs this documentary. Let's make it happen.